US Air Force and Stanford test AI copilot in real flight emergencies

For all the automation built into modern aircraft, the most critical decisions in an emergency still rest with the human in the cockpit, often under crushing time pressure.

That unforgiving reality is what drew researchers from Stanford University into an unusual collaboration with the US Air Force Test Pilot School: placing an artificial intelligence “co-pilot” in the cockpit to assess whether it could help pilots make safer decisions when seconds matter most.

The project, conducted through the DAF-Stanford AI Studio, has moved well beyond theory. After months of simulator trials, the system was flown aboard a Learjet 25 by Air Force test pilots, marking a rare instance of generative AI being evaluated in real aircraft emergency scenarios rather than purely in laboratory or simulator settings.

How Stanford designed an AI copilot for flight emergencies

The idea originated in Stanford’s Intelligent Systems Laboratory, led by Mykel Kochenderfer, an associate professor of aeronautics and astronautics whose work focuses on decision-making in safety-critical environments. For Kochenderfer, the challenge is also personal.

“Pilots train intensely for emergencies, but accident databases show that many mishaps stem from human error,” he said. “If we can get the right information to the pilot as quickly as possible, we can significantly improve safety.”

Rather than attempting to replace pilot judgement, the team set out to build an assistant designed to work quietly in the background, reducing workload precisely when cognitive stress peaks.

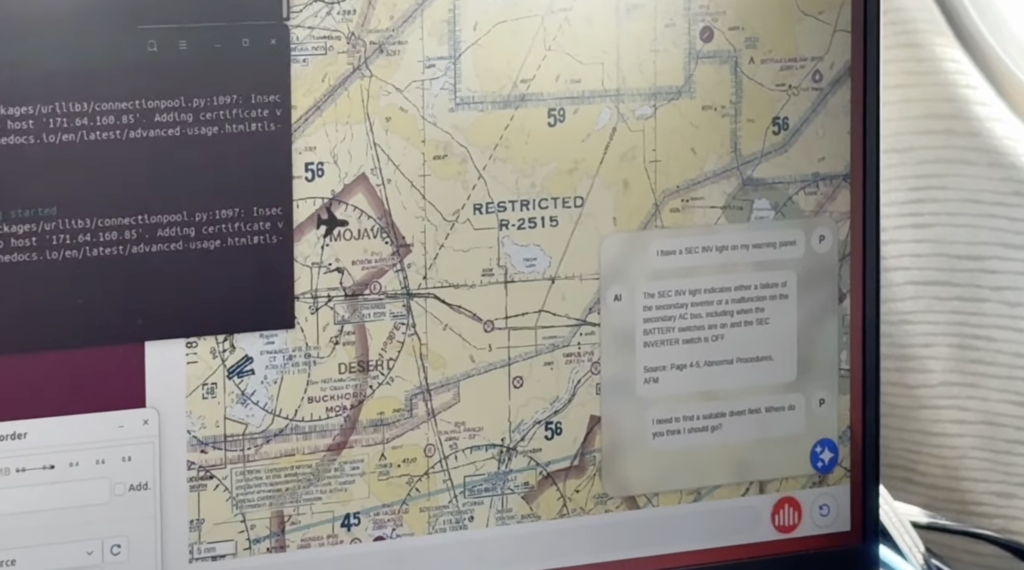

The result is an iPad-based system that uses retrieval-augmented generation, effectively an aviation-grade “Ctrl + F”, to scan flight manuals, emergency checklists, and technical documentation in seconds.
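For readers unfamiliar with retrieval-augmented generation, the retrieval half of the idea can be sketched in a few lines of Python. This is only an illustration under loud assumptions, not the Stanford team's implementation: the passages are invented placeholders rather than real checklist content, the scoring is a simple keyword weighting swapped in for whatever search method the actual system uses, and the names `PASSAGES`, `retrieve`, and `build_prompt` are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical placeholder passages, NOT real checklist or manual content.
PASSAGES = {
    "engine-fire": "Engine fire warning light: thrust lever idle, fuel cutoff, fire extinguisher discharge.",
    "hydraulic-low": "Hydraulic pressure low light: check fluid quantity, switch to auxiliary pump.",
    "cabin-pressure": "Cabin altitude warning: don oxygen masks, begin emergency descent.",
}


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def build_idf(passages):
    """Weight each term so that words found in fewer passages count for more."""
    n = len(passages)
    doc_freq = Counter()
    for text in passages.values():
        doc_freq.update(set(tokenize(text)))
    return {term: math.log((1 + n) / (1 + count)) + 1.0 for term, count in doc_freq.items()}


def retrieve(query, passages, top_k=1):
    """Return the keys of the best-matching passages, or an empty list if nothing matches."""
    idf = build_idf(passages)
    query_terms = set(tokenize(query))
    scored = []
    for key, text in passages.items():
        counts = Counter(tokenize(text))
        score = sum(counts[term] * idf.get(term, 0.0) for term in query_terms)
        scored.append((score, key))
    # Highest score first; ties broken alphabetically by key.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [key for score, key in scored[:top_k] if score > 0]


def build_prompt(query, passages):
    """Assemble the text a language model would receive: retrieved context plus the question."""
    hits = retrieve(query, passages)
    context = "\n".join(passages[key] for key in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


print(build_prompt("hydraulic pressure low", PASSAGES))
```

The point of the design is that the language model only sees passages pulled from the source documents, which is one common way to limit confidently wrong answers. A query that matches nothing returns no passages, so a real system would need to handle that case explicitly rather than let the model guess.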

How AI could cut response times during cockpit emergencies

Marc Schlichting, a PhD candidate on the project, said time is the most unforgiving constraint in the cockpit.

“When a pilot spots an anomaly, such as a warning light, time is always the limiting factor,” he explained. “Normally, they would flip through checklists and manuals to diagnose the issue. The assistant can scan those sources and return guidance within seconds. It may not sound like much, but in an emergency, those seconds matter.”

The team was acutely aware of the risks associated with generative AI, particularly the danger of confidently delivered but incorrect outputs. Kochenderfer said a substantial portion of the development effort focused on reducing that risk and ensuring the system’s responses could be trusted under maximum stress.

Pilots tested an AI copilot in high-stress flight scenarios

Before ever flying, the system was pushed hard in Stanford’s full-motion research simulator. The six-degrees-of-freedom platform recreates aircraft motion, vibration, and control forces, allowing researchers to stage rare, cascading failures too dangerous to attempt in real flight.

Schlichting described the scenarios bluntly as “a pilot’s nightmare in a controlled setting”: combinations of failures designed to overwhelm attention and test whether AI assistance genuinely helps rather than distracts.

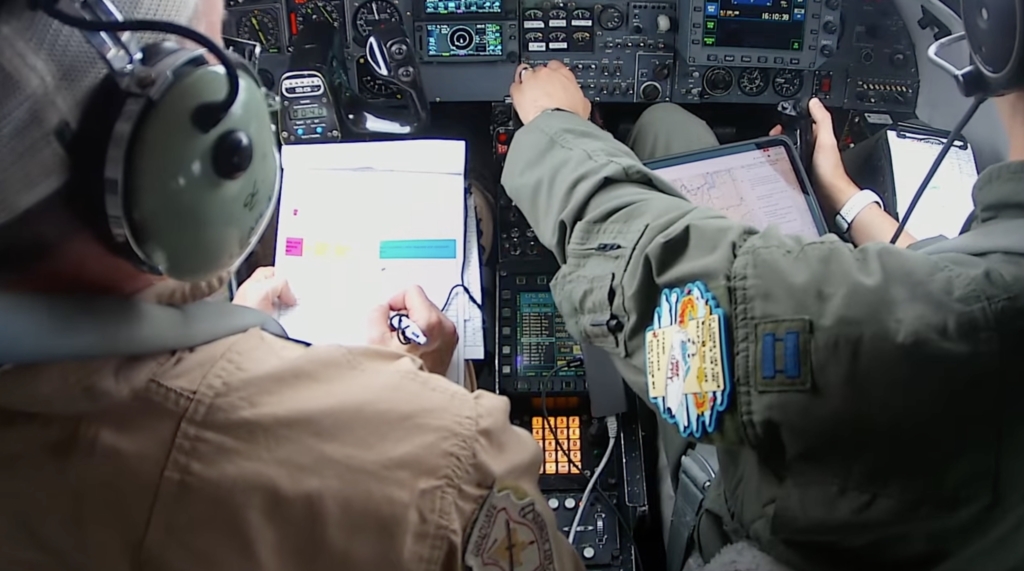

Only after that phase did the project move to Edwards Air Force Base, where 24 Test Pilot School pilots flew a Learjet 25 through two carefully designed emergency scenarios, once without the AI assistant and once with it. The goal was not to prove the AI superior to human judgment, but to measure its impact on workload, diagnosis speed, and decision quality.

What flight testing revealed about pilots using AI assistants

Major John “Heater” Alora, director of operations at the DAF-Stanford AI Studio, said the partnership was a natural extension of both organisations’ missions.

“Stanford is advancing the frontier of AI and autonomy, while TPS is the nation’s leading institution for testing new flight-system technologies,” he said.

For the pilots, the flights offered insights into human behaviour as much as software performance. Captain Jorge “FAIR” Cervantes, a TPS student involved in the tests, said the experience revealed subtle but important patterns.

“The flights helped me better understand how pilots chose to interact with the assistant, what information they trusted, and what follow-up questions they asked under pressure,” he said. Researchers say those insights will shape how future AI copilots are designed and introduced.

What AI copilots could mean for commercial aviation

While rooted in military test flying, the work has implications well beyond combat aviation. Alora noted that AI copilots could support long-duration military missions while also improving safety and workload management in commercial cockpits, where crews face increasing automation and information density.

The results of the flight trials are still being analysed, with a detailed academic paper expected later this year. In parallel, the Stanford team is already iterating on a next-generation version of the assistant, adding voice interaction and vision capabilities to better align with how pilots naturally work.

“Each phase of testing was a baby step toward building the confidence required to deploy AI assistants responsibly in safety-critical environments,” Kochenderfer said. The end goal, he added, is simple: “to make flying safer for everyone.”

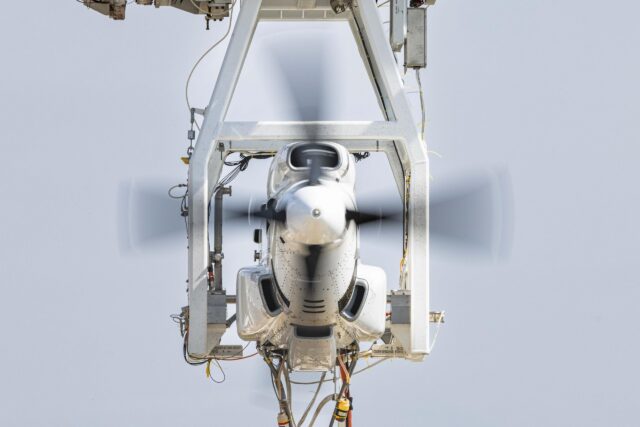

Featured image: Stanford / USAF